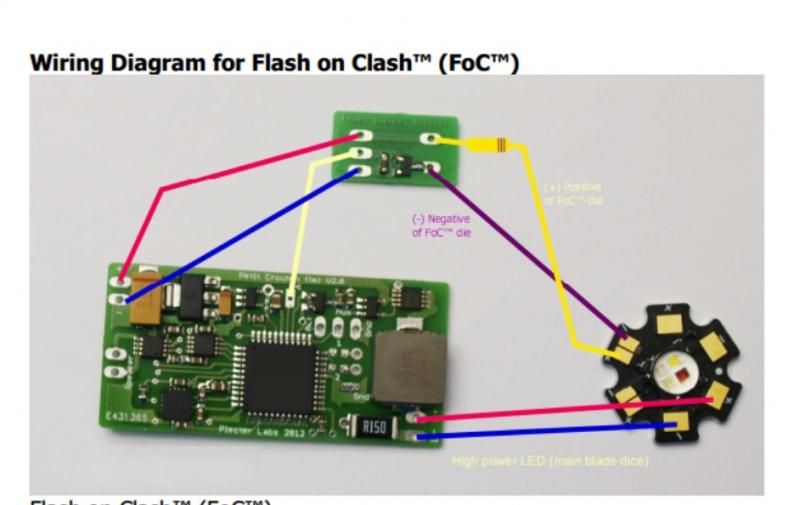

I am currently planning wiring for a probable future project involving a Red/Red/White Luxeon Tri-Rebel Star powered by a PC v3. I would just like to run this by those of you with more experience to make sure I don't make any silly mistakes.

The plan is to use the white LED as a single flash-on-clash colour, run off the 3.3V FoC pad. Working out the resistor for the single LED is straightforward: I just need to source an SMD 2512-type resistor, 1ohm, rated for at least 2W. Correct?

I then want to run the two red LEDs in series as the main blade colour (powered using the main high-power LED pads on the PC in conjunction with a 185650 7.4V pack). This is where I'm a little less certain.

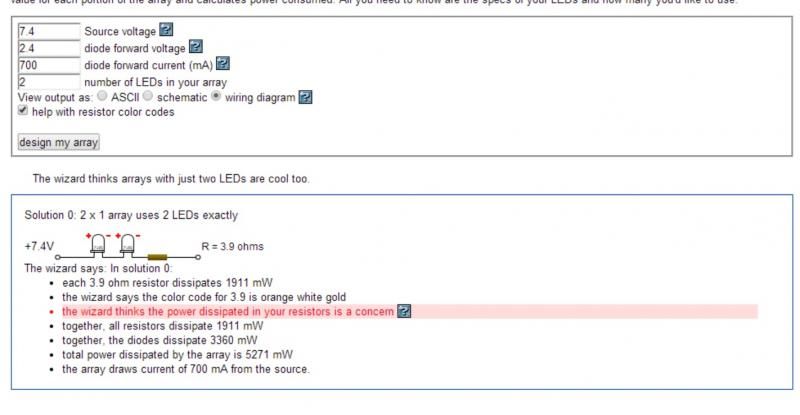

The forward voltage of the red LEDs is 2.4V and they are to be run at 700mA. I understand that, in order to work out the required resistor for a series circuit, I need to add the combined voltage of the two LEDs as my forward voltage in the equation, i.e. 2.4 + 2.4 = 4.8 V.

So, I have the following formula:

(7.4V - 4.8V) / 700mA = 2.6V / 0.7A ≈ 3.71ohm (or 3.9ohm as the next standard value up)

Is this correct?
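To double-check the arithmetic, here is the same calculation as a small Python sketch. The function name `series_resistor` is just something made up for illustration, and it assumes both red LEDs sit at exactly 2.4V forward voltage and the pack delivers exactly 7.4V, which a real pack will not do across its whole charge range.

```python
def series_resistor(supply_v, led_forward_vs, current_a):
    """Resistance (ohms) needed to drop the leftover voltage at the target current.

    Assumes the LEDs are wired in series, so their forward voltages add up.
    """
    total_vf = sum(led_forward_vs)
    if total_vf >= supply_v:
        raise ValueError("supply voltage must exceed the combined forward voltage")
    return (supply_v - total_vf) / current_a


# Two red LEDs at 2.4V each, 7.4V pack, 700mA target current
r = series_resistor(7.4, [2.4, 2.4], 0.700)
print(f"{r:.2f} ohm")  # roughly 3.71 ohm
```

Rounding up to a 3.9ohm part means the LEDs would run slightly under 700mA rather than over it.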

I also tried the calculator wizard on this and it seems that it presumes my LEDs all have the same forward voltage and adds up the voltages for me. This is what it's telling me ...

With regard to choosing the appropriate wattage for the resistor, what is important? Is it the power dissipated by the LEDs or the total power dissipated by the LEDs and the resistor?

It's not that crucial, I suppose, as I can just use a 3.9ohm / 10W resistor and it will cover it all. But it wouldn't hurt to understand it for future reference. ("Electronics for Dummies" hasn't arrived yet)

And why is the wizard telling me it is concerned about the power dissipated in the resistors? Do I need to worry about that?
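For reference, here is a rough sketch of the dissipation figures involved, using the standard relations P = I²R for the resistor and P = I × V for the LEDs. The function name `dissipation` is hypothetical, and it assumes the forward voltage stays fixed at 2.4V per LED regardless of current, which is a simplification. It only lays the numbers out side by side; it does not reproduce whatever check the wizard runs.

```python
def dissipation(supply_v, led_forward_vs, resistor_ohm):
    """Estimate current and power split for LEDs in series with one resistor.

    Assumes a fixed forward voltage per LED (a simplification).
    """
    total_vf = sum(led_forward_vs)
    current = (supply_v - total_vf) / resistor_ohm  # Ohm's law across the resistor
    return {
        "current_a": current,
        "resistor_w": current ** 2 * resistor_ohm,  # heat in the resistor itself
        "leds_w": current * total_vf,               # power going into the LEDs
    }


# 7.4V pack, two 2.4V red LEDs, 3.9ohm resistor
result = dissipation(7.4, [2.4, 2.4], 3.9)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

With these assumptions the resistor's own share comes out at under 2W, which is the figure to compare against a resistor's wattage rating.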
